[tracking.js] Using the front-end face recognition library tracking.js for liveness detection and photo capture in Vue 2

Tags: Vue, front end, vue.js, javascript, programming languages, ecmascript

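Tracking.js is a standalone JavaScript library for tracking data received in real time from a camera. The tracked data can be a color or a person, so a JavaScript event can be triggered when a specific color is detected, or when a body or face appears and moves. The API is easy to use and has only a handful of methods and events, which is enough for most needs.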
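The event-driven pattern described above can be sketched in a few lines. This is a minimal sketch, not the component's actual code: the function name `startFaceTracking` is hypothetical, and the `tracking` global is passed in as a parameter (an assumption made here so the function can be exercised with a stub). It uses only calls that also appear in the components below.

```javascript
// Sketch: wire a face tracker to a <video> element.
// `trackingLib` is the global `tracking` object provided by tracking-min.js,
// passed in as a parameter (an assumption, so the function is easy to stub).
function startFaceTracking(trackingLib, videoSelector, onFaces) {
  const tracker = new trackingLib.ObjectTracker('face')

  // Each 'track' event carries event.data: an array of
  // { x, y, width, height } rectangles, one per detected face.
  tracker.on('track', (event) => onFaces(event.data))

  // { camera: true } asks tracking.js to request the webcam stream itself.
  const task = trackingLib.track(videoSelector, tracker, { camera: true })

  // The caller can call task.stop() to stop tracking.
  return task
}
```

In a browser this would be called as `startFaceTracking(window.tracking, '#video', (faces) => console.log(faces.length))`.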

My project needed both liveness detection and photo capture.

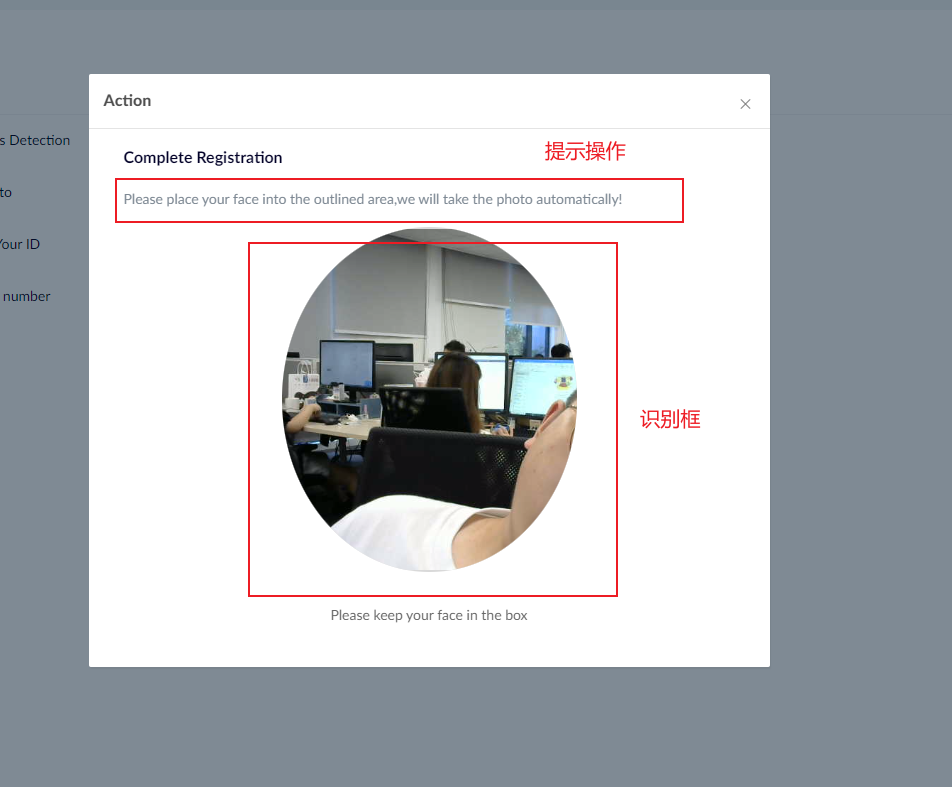

Result

Liveness detection component

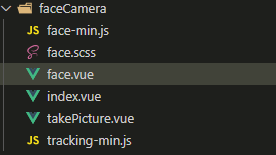

You need to download the minified files tracking-min.js and face-min.js yourself; a web search will find them.
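Because the two files are loaded as plain scripts, a quick sanity check before creating a tracker can save debugging time. The sketch below rests on assumptions that should be verified against the files you downloaded: that tracking-min.js registers a global `tracking` object, and that face-min.js stores its classifier data under `tracking.ViolaJones.classifiers.face`. The function name is hypothetical.

```javascript
// Returns a list of problems; an empty list means both scripts appear to be loaded.
// Assumptions: tracking-min.js registers a global `tracking` object, and
// face-min.js stores its data under tracking.ViolaJones.classifiers.face.
function findMissingTrackingParts(globalObj) {
  const problems = []
  const lib = globalObj.tracking

  if (!lib || typeof lib.ObjectTracker !== 'function') {
    problems.push('tracking-min.js is not loaded')
    return problems
  }

  const classifiers = lib.ViolaJones && lib.ViolaJones.classifiers
  if (!classifiers || !classifiers.face) {
    problems.push('face-min.js is not loaded')
  }
  return problems
}
```

In the components below this could be called as `findMissingTrackingParts(window)` before `new tracking.ObjectTracker('face')`.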

<template>

<el-dialog

:visible="modalVisible"

width="681px"

custom-class="compaines-dialog"

title="Complete Registration"

class="face-dialog"

v-dialogDrag

:close-on-click-modal="false"

@close="close"

:append-to-body="true"

>

<div class="face" v-loading="faceloading">

<p class="big-title">Liveness detection</p>

<!-- <p v-if="steps === 'open' || steps === 'screen'">

Please place your face into the outlined area,we will take the photo

automatically!

</p> -->

<p v-if="steps === 'open' || steps === 'screen'">

{{ faceTips[imgList.length] }}

</p>

<div

v-if="steps === 'screen' && imgList.length > 0"

style="text-align: center; font-size: 30px"

>

<svg-icon :iconClass="faceLocation[imgList.length]" />

</div>

<p v-if="steps === 'end'">

<span v-if="faceOk" class="success">Successful!</span>

<span v-else class="fail">Failed!</span>

</p>

<div class="video-container" v-show="steps !== 'end'">

<video

id="video"

preload

autoplay

loop

muted

width="295"

height="345"

:style="reverse ? 'transform:rotateY(180deg);' : ''"

></video>

<canvas id="canvas" width="295" height="345"></canvas>

<canvas

id="shortCut"

width="295"

height="345"

style="opacity: 0"

></canvas>

<canvas

id="canvas1"

width="295"

height="345"

style="opacity: 0"

></canvas>

<i @click="reverseVideo" v-show="steps === 'screen'">

<svg-icon iconClass="icon-camera" class="icon-camera" />

</i>

</div>

<div v-show="steps === 'end'" style="text-align: center">

<img v-if="faceOk" src="@/icons/img/face-success.png" alt="" />

<img v-else src="@/icons/img/face-fail.png" alt="" />

</div>

<div class="btns">

<div class="add-num w300" @click="start" v-if="steps === 'open'">

TURN CAMERA ON

</div>

<div

class="retake-btn w300"

style="margin-right: 0"

@click="close"

v-if="steps === 'screen'"

>

TURN CAMERA OFF

</div>

<div

class="retake-btn w300"

@click="tryAgain"

v-if="steps === 'end' && !faceOk"

>

TRY AGAIN

</div>

<div

class="add-num w300"

@click="finish"

v-if="steps === 'end' && faceOk"

>

FINISH

</div>

<p class="tips" v-if="steps === 'screen'">

(To complete the liveness detection your camera must remain on)

</p>

</div>

<div class="imgs" v-show="false">

<p>Unsaved images</p>

<p>Saved images</p>

<div id="img"></div>

</div>

</div>

</el-dialog>

</template>

<script>

import './tracking-min.js'

import './face-min.js'

import { livenessCheck } from '@modules/kyc/api/system/system'

import debounce from 'lodash/debounce'

export default {

name: 'testTracking',

model: {

prop: 'formData',

event: 'input',

},

props: {

modalVisible: {

type: Boolean,

default: false,

},

formData: {

type: [Object, String],

},

option: {

type: String,

default: '',

},

},

data() {

return {

saveArray: {},

imgView: false,

timer: true,

steps: 'open',

imgList: [],

faceTips: [

'Please place your face into the outlined area,we will take the photo automatically!',

'Look to the left',

'Look to the right',

'Tilt your head up',

'Tilt your head down',

],

faceLocation: [

'',

'arrow-left',

'arrow-right',

'arrow-up',

'arrow-down',

'',

],

faceloading: false,

faceOk: false,

startPhoto: false,

reverse: true,

}

},

created() {

this.start = debounce(this.start, 500)

},

methods: {

// Turn the camera on

start() {

window.navigator.mediaDevices

.getUserMedia({

video: true,

})

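.then((stream) => {

// This stream only confirms camera permission; tracking.track() opens its own, so release it

stream.getTracks().forEach((track) => track.stop())

this.openVideo()

})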

.catch((err) => {

this.$message.error('Cannot capture user camera')

})

},

openVideo() {

let that = this

this.steps = 'screen'

this.startPhoto = true

let canvas = document.getElementById('canvas')

let context = canvas.getContext('2d')

let tracker = new tracking.ObjectTracker('face')

tracker.setInitialScale(4)

tracker.setStepSize(2)

tracker.setEdgesDensity(0.1)

this.trackerTask = tracking.track('#video', tracker, { camera: true })

tracker.on('track', function (event) {

context.clearRect(0, 0, canvas.width, canvas.height)

event.data.forEach(function (rect) {

if (that.reverse) {

context.strokeRect(

295 - rect.x - rect.width,

rect.y,

rect.width,

rect.height

)

} else {

context.strokeRect(rect.x, rect.y, rect.width, rect.height)

}

context.strokeStyle = '#fff'

context.fillStyle = '#fff'

that.saveArray.x = rect.x

that.saveArray.y = rect.y

that.saveArray.width = rect.width

that.saveArray.height = rect.height

})

})

this.timer = true

this.setPhotoInterval()

},

setPhotoInterval() {

const countFun = () => {

setTimeout(() => {

if (this.timer && this.startPhoto) {

countFun()

if (this.reverse) {

if (

this.saveArray.x < 150 &&

this.saveArray.y < 150 &&

this.saveArray.width > 150 &&

this.saveArray.height > 150

) {

this.getPhoto()

}

} else {

if (

295 - this.saveArray.x - this.saveArray.width < 150 &&

this.saveArray.y < 150 &&

this.saveArray.width > 150 &&

this.saveArray.height > 150

) {

this.getPhoto()

}

}

}

}, 1000)

}

countFun()

},

// Capture a photo of the face

getPhoto() {

try {

let video = document.getElementById('video')

let cut = document.getElementById('shortCut')

let context2 = cut.getContext('2d')

context2.drawImage(video, 0, 0, 295, 345)

this.keepImg()

} catch (error) {}

},

// Convert a canvas to an image

convertCanvasToImage(canvas) {

let image = new Image()

image.src = canvas.toDataURL('image/png')

return image

},

// Convert a base64 data URL to a File; dataurl is the base64 string, filename must include an extension such as .jpg or .png

dataURLtoFile(dataurl, filename) {

let arr = dataurl.split(','),

mime = arr[0].match(/:(.*?);/)[1],

bstr = atob(arr[1]),

n = bstr.length,

u8arr = new Uint8Array(n)

while (n--) {

u8arr[n] = bstr.charCodeAt(n)

}

return new File([u8arr], filename, { type: mime })

},

// Save the image

keepImg() {

// Start from the full screenshot

let cut = document.getElementById('shortCut')

let context = cut.getContext('2d')

// Copy the left 294x345 region of the full screenshot into canvas1

let imgData = context.getImageData(0, 0, 294, 345)

let canvas1 = document.getElementById('canvas1')

let context1 = canvas1.getContext('2d')

context1.putImageData(imgData, 0, 0)

let img = document.getElementById('img')

// Save the 294x345 image held in canvas1

let photoImg = document.createElement('img')

photoImg.src = this.convertCanvasToImage(canvas1).src

img.appendChild(photoImg)

this.imgList.push(

this.dataURLtoFile(

this.convertCanvasToImage(canvas1).src,

`person${this.imgList.length}.jpg`

)

)

this.timer = false

// After a successful capture, pause 3 seconds before the next one; upload once 5 images are captured

if (this.imgList.length === 5) {

this.sendImages()

} else {

setTimeout(() => {

this.timer = true

this.setPhotoInterval()

}, 3000)

}

},

sendImages() {

let formData = new FormData()

this.imgList.forEach((item) => {

formData.append('files', item)

})

this.faceloading = true

formData.append('actionId', this.formData.id)

livenessCheck(formData, { isUpload: true })

.then((res) => {

if (res.success) {

this.faceOk = true

} else {

this.faceOk = false

}

this.faceloading = false

this.steps = 'end'

})

.catch((err) => {

this.openVideo()

this.faceloading = false

this.imgList = []

})

},

tryAgain() {

this.imgList = []

this.openVideo()

},

finish() {

this.closeFace()

this.$emit('Liveness check success')

this.$emit('close', 'success')

},

clearCanvas() {

let c = document.getElementById('canvas')

let c1 = document.getElementById('canvas1')

let cxt = c.getContext('2d')

let cxt1 = c1.getContext('2d')

cxt.clearRect(0, 0, 581, 436)

cxt1.clearRect(0, 0, 581, 436)

},

closeFace() {

try {

this.startPhoto = false

this.timer = false

this.imgList = []

this.clearCanvas()

// Turn the camera off

let video = document.getElementById('video')

video.srcObject.getTracks()[0].stop()

// Stop tracking

this.trackerTask.stop()

} catch (error) {}

},

close() {

this.closeFace()

if(this.faceOk){

this.$emit('close', 'success')

}else{

this.$emit('close')

}

},

reverseVideo() {

this.reverse = !this.reverse

},

},

watch: {},

}

</script>

<style lang="scss">

@import './face.scss';

</style>
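In the liveness detection component, the mirrored face box drawn in `openVideo()` and the capture condition checked in `setPhotoInterval()` both reduce to simple arithmetic on the tracked rectangle. The sketch below pulls that arithmetic into two pure functions. The function names are hypothetical and not part of the original component; the 295 px width and the 150 px thresholds are the values the component uses.

```javascript
const FRAME_WIDTH = 295 // canvas width used by the component

// Flip a rectangle horizontally, the same calculation the component
// uses when it draws the box over a mirrored video.
function mirrorRect(rect, frameWidth = FRAME_WIDTH) {
  return { ...rect, x: frameWidth - rect.x - rect.width }
}

// True when the box starts in the upper-left part of the frame and is
// larger than `limit` in both dimensions, i.e. the face fills the outline.
function isFaceLargeEnough(rect, limit = 150) {
  return (
    rect.x < limit &&
    rect.y < limit &&
    rect.width > limit &&
    rect.height > limit
  )
}
```

Keeping these checks free of DOM access makes the thresholds easy to tune and to unit test, separately from the camera and timer logic.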

Photo capture component

<template>

<el-dialog

:visible="modalVisible"

width="681px"

custom-class="compaines-dialog"

title="Complete Registration"

class="face-dialog"

@close="close"

>

<div class="face" v-loading="faceloading">

<p class="big-title" v-if="steps !== 'save'">Take a profile picture</p>

<p class="big-title success" v-if="steps === 'save'">Complete!</p>

<p v-if="steps === 'open'">

Please turn on your camera and center your face within the below guides.

</p>

<p v-if="steps === 'take'">Position your face within the outlined area,click the button and the image will be saved as your profile picture!</p>

<p v-if="steps === 'save'">

Happy with your picture? If not, you can take another one.

</p>

<div class="video-container">

<video

id="video"

preload

autoplay

loop

muted

width="295"

height="345"

:style="reverse ? 'transform:rotateY(180deg);' : ''"

></video>

<canvas id="canvas" width="295" height="345"></canvas>

<canvas id="shortCut" v-show="false"></canvas>

<img :src="imgSrc" alt="" v-show="steps === 'save'" />

<i @click="reverseVideo" v-show="steps==='take'">

<svg-icon iconClass="icon-camera" class="icon-camera" />

</i>

</div>

<div class="btns">

<div class="add-num w300" @click="start" v-if="steps === 'open'">

TURN CAMERA ON

</div>

<div class="add-num w300" @click="getPhoto" v-if="steps === 'take'">

SAVE THE IMAGE

</div>

<div class="retake-btn" @click="openVideo" v-if="steps === 'save'">

RETAKE

</div>

<div class="add-num" @click="keepImg" v-if="steps === 'save'">

USE PICTURE

</div>

<p class="tips" v-if="steps === 'take'">

(To take a profile picture your camera must remain on)

</p>

<div class="imgs" v-show="false">

<canvas

id="canvas1"

width="295"

height="345"

v-show="steps === 'save'"

></canvas>

<p>save images</p>

<div id="img"></div>

</div>

</div>

</div>

</el-dialog>

</template>

<script>

import './tracking-min.js'

import './face-min.js'

import { individualPhoto } from '@modules/kyc/api/system/system'

import debounce from 'lodash/debounce'

export default {

name: 'testTracking',

model: {

prop: 'formData',

event: 'input',

},

props: {

modalVisible: {

type: Boolean,

default: false,

},

formData: {

type: Object,

},

option: {

type: String,

default: '',

},

},

data() {

return {

saveArray: {},

imgView: false,

timer: null,

steps: 'open',

imageFile: {},

faceloading: false,

btnLoading: false,

imgSrc: '',

reverse: true,

}

},

created() {

this.start = debounce(this.start, 500)

},

methods: {

// Turn the camera on

start() {

window.navigator.mediaDevices

.getUserMedia({

video: true,

})

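.then((stream) => {

// This stream only confirms camera permission; tracking.track() opens its own, so release it

stream.getTracks().forEach((track) => track.stop())

this.openVideo()

})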

.catch((err) => {

this.$message.error('Cannot capture user camera')

})

},

openVideo() {

let that = this

this.steps = 'take'

let saveArray = {}

let canvas = document.getElementById('canvas')

let context = canvas.getContext('2d')

let tracker = new tracking.ObjectTracker('face')

tracker.setInitialScale(4)

tracker.setStepSize(1.5)

tracker.setEdgesDensity(0.1)

this.imgSrc = ''

this.trackerTask = tracking.track('#video', tracker, { camera: true })

tracker.on('track', function (event) {

context.clearRect(0, 0, canvas.width, canvas.height)

event.data.forEach(function (rect) {

if (that.reverse) {

context.strokeRect(

295 - rect.x - rect.width,

rect.y,

rect.width,

rect.height

)

} else {

context.strokeRect(rect.x, rect.y, rect.width, rect.height)

}

context.strokeStyle = '#fff'

context.fillStyle = '#fff'

saveArray.x = rect.x

saveArray.y = rect.y

saveArray.width = rect.width

saveArray.height = rect.height

})

})

},

// Capture a photo of the face

getPhoto() {

let video = document.getElementById('video')

let can = document.getElementById('shortCut')

can.width = video.videoWidth

can.height = video.videoHeight

let context2 = can.getContext('2d')

if (this.reverse) {

context2.scale(-1, 1)

context2.translate(-video.videoWidth, 0)

}

context2.drawImage(video, 0, 0, video.videoWidth, video.videoHeight)

this.imgSrc = this.convertCanvasToImage(can).src

this.steps = 'save'

this.clearCanvas()

// Stop tracking

this.trackerTask.stop()

// Turn the camera off

video.srcObject.getTracks()[0].stop()

// this.imgView = true

},

// Take a screenshot

screenshot() {

this.getPhoto()

},

// Convert a canvas to an image

convertCanvasToImage(canvas) {

let image = new Image()

image.src = canvas.toDataURL('image/png')

return image

},

// Convert a base64 data URL to a File; dataurl is the base64 string, filename must include an extension such as .jpg or .png

dataURLtoFile(dataurl, filename) {

let arr = dataurl.split(','),

mime = arr[0].match(/:(.*?);/)[1],

bstr = atob(arr[1]),

n = bstr.length,

u8arr = new Uint8Array(n)

while (n--) {

u8arr[n] = bstr.charCodeAt(n)

}

return new File([u8arr], filename, { type: mime })

},

// Save the image

keepImg() {

// let can = document.getElementById('shortCut')

// let context = can.getContext('2d')

// let imgData = context.getImageData(0, 0, 294, 345)

// let canvas1 = document.getElementById('canvas1')

// let context1 = canvas1.getContext('2d')

// context1.putImageData(imgData, 0, 0)

// let img = document.getElementById('img')

// let photoImg = document.createElement('img')

// photoImg.src = this.convertCanvasToImage(canvas1).src

// img.appendChild(photoImg)

// this.imageFile = this.dataURLtoFile(

// this.convertCanvasToImage(canvas1).src,

// 'person.jpg'

// )

this.imageFile = this.dataURLtoFile(this.imgSrc, 'person.jpg')

let formData = new FormData()

formData.append('file', this.imageFile)

this.faceloading = true

individualPhoto(formData, { isUpload: true })

.then((res) => {

if (res.success) {

this.faceloading = false

this.closeFace()

this.$emit('close', 'success')

this.$store

.dispatch('GetBusinessUser')

.then((res) => {})

.catch((err) => {})

} else {

this.openVideo()

this.faceloading = false

this.imgList = []

}

})

.catch((err) => {

this.openVideo()

this.faceloading = false

this.imgList = []

})

},

clearCanvas() {

let c = document.getElementById('canvas')

let c1 = document.getElementById('canvas1')

let cxt = c.getContext('2d')

let cxt1 = c1.getContext('2d')

cxt.clearRect(0, 0, 581, 436)

cxt1.clearRect(0, 0, 581, 436)

},

closeFace() {

try {

this.steps = 'open'

this.clearCanvas()

// Turn the camera off

let video = document.getElementById('video')

video.srcObject.getTracks()[0].stop()

// Stop tracking

this.trackerTask.stop()

} catch (error) {}

},

close() {

this.closeFace()

this.$emit('close')

},

reverseVideo() {

this.reverse = !this.reverse

},

},

watch: {

faceView(v) {

if (v == false) {

this.closeFace()

}

},

},

destroyed() {

// clearInterval(this.timer)

},

}

</script>

<style lang="scss">

@import './face.scss';

</style>
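Both components turn the canvas into a data URL with `canvas.toDataURL('image/png')` and then name the uploaded file with a `.jpg` extension, so the file name and the actual MIME type disagree. Whether that matters depends on the backend, which is not shown here. The sketch below shows one way to derive the name from the data URL itself; the helper names `parseDataUrl` and `fileNameFor` are hypothetical.

```javascript
// Split a base64 data URL into its MIME type and raw bytes.
function parseDataUrl(dataUrl) {
  const [header, base64] = dataUrl.split(',')
  const match = header.match(/^data:(.*?);base64$/)
  if (!match || base64 === undefined) {
    throw new Error('Not a base64 data URL')
  }
  const binary = atob(base64) // one character per byte
  const bytes = Uint8Array.from(binary, (ch) => ch.charCodeAt(0))
  return { mime: match[1], bytes }
}

// Pick a file extension that matches the MIME type instead of hard-coding ".jpg".
function fileNameFor(baseName, mime) {
  const extensions = { 'image/png': 'png', 'image/jpeg': 'jpg' }
  const ext = extensions[mime] || 'bin'
  return `${baseName}.${ext}`
}
```

In the browser, the result can be wrapped as `new File([bytes], fileNameFor('person', mime), { type: mime })` and appended to the `FormData` as the components already do.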

Stylesheet (face.scss)

.face {

p {

text-align: center;

font-size: 16px;

color: #333333;

}

.big-title {

margin-top: 36PX;

font-size: 24PX;

font-weight: 600;

color: #030229;

text-align: center;

}

.success {

color: #47BFAF;

}

.fail {

color: #C81223;

}

.video-container {

background: url(~@/icons/img/face.png);

background-size: cover;

position: relative;

width: 295PX;

height: 345PX;

border-radius: 4%;

overflow: hidden;

margin: 0 auto;

margin-top: 34PX;

img,

video,

#canvas,

#shortCut,

#canvas1 {

position: absolute;

}

img {

width: 100%;

height: 100%;

object-fit: cover;

}

video{

object-fit: cover;

}

.icon-camera{

position: absolute;

font-size: 32px;

bottom: 20px;

right: 20px;

cursor: pointer;

}

}

.btns {

padding: 10PX;

text-align: center;

margin-top: 6PX;

.tips {

font-size: 14PX;

color: #666;

margin-top: 16PX;

line-height: 24PX;

}

}

.imgs {

padding: 10PX;

p {

font-size: 16PX;

}

}

.add-num {

display: inline-block;

font-size: 16PX;

padding: 12PX 14PX;

margin-right: 4PX;

border-radius: 4PX;

color: #ffffff;

background: #47BFAF;

cursor: pointer;

}

.retake-btn {

width: 145PX;

display: inline-block;

font-size: 16PX;

padding: 12PX 14PX;

margin-right: 24PX;

border-radius: 4PX;

color: #828282;

background: #E7E7E7;

cursor: pointer;

}

.w300 {

width: 300PX;

}

}

.face-dialog {

.el-dialog__body {

padding-top: 0;

padding-bottom: 15px;

}

}